- Drift monitoring captures a baseline of 13 SEO-critical fields and diffs every later snapshot against it

- 17 comparison rules across CRITICAL, WARNING, and INFO tiers detect regressions like flipped canonicals, added noindex, removed schema

- SQLite-backed, local-first: state lives in `.seo-drift.db` in your project, with no SaaS dashboard

- CI-ready: `compare` exits non-zero on critical drift, which fails the build, so you ship only when clean

- Contributed by Dan Colta via the Pro Hub Challenge, integrated and security-hardened in Claude SEO v1.9.0

Three months after a deploy, you notice rankings are down. You dig and find the canonical tags got flipped during a refactor. The fix takes 5 minutes. Recovery takes 3 months. Drift monitoring catches that at deploy time.

The Silent Killer of Organic Traffic

On-page SEO regressions are invisible at deploy time. Title tags get truncated by CMS migrations. Meta descriptions get stripped by security middleware. Schema blocks vanish during refactors. Hreflang return tags break when translations get republished. Each individual change is small. Together they erode rankings, and you only notice in Search Console weeks later when the line is already trending the wrong way.

The traditional SEO toolkit catches none of this in real time. Crawlers run on a schedule and surface issues days late. Rank trackers measure outcomes, not causes. Search Console reports lag the live state by 2 to 5 days. By the time the dashboard turns red, the damage is already compounding. The deploy that caused the regression is usually two or three releases back, the engineer who touched the affected template has moved on to other work, and the root cause investigation eats a week of senior time.

Drift monitoring closes the loop. It treats your live HTML the way Git treats your source code: baseline a known good state, diff against current, surface every meaningful change. The pattern is borrowed from software engineering for a reason. Engineers caught this problem 20 years ago with version control, linters, and CI. SEO is just late to the party.

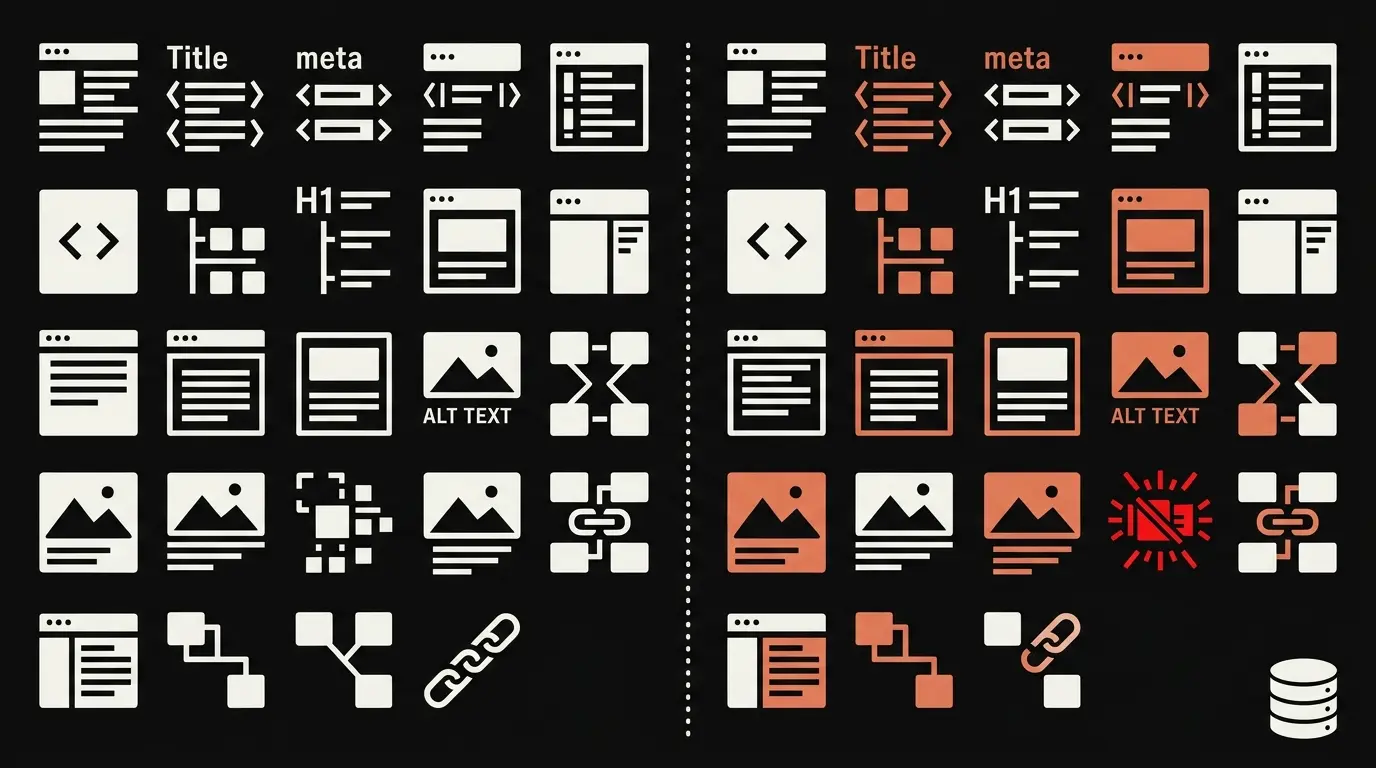

What Drift Monitoring Actually Checks

Every baseline captures 13 SEO-critical fields: title, meta description, canonical URL, robots meta, H1 array, H2 array, H3 array, JSON-LD schema array, Open Graph dictionary, performance metrics (the Core Web Vitals LCP, INP, and CLS, plus the Lighthouse performance score), HTTP status code, full HTML SHA-256 hash, and schema content SHA-256 hash. Comparison runs 17 rules across three severity tiers.
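To make the field list concrete, here is a minimal Python sketch of what one snapshot record could hold. The `Snapshot` class, its field names, and the `sha256_hex` and `hash_schema` helpers are hypothetical illustrations, not the skill's actual data model. The point is that two of the 13 fields are SHA-256 digests, so any change to the page bytes or to the JSON-LD content shows up as a changed hash.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import dataclass, fields


def sha256_hex(text: str) -> str:
    """Hex SHA-256 digest of a UTF-8 string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def hash_schema(blocks: list[dict]) -> str:
    """Hash JSON-LD blocks; sort_keys makes the digest independent of key order."""
    return sha256_hex(json.dumps(blocks, sort_keys=True, separators=(",", ":")))


@dataclass
class Snapshot:
    """Hypothetical record of the 13 fields a baseline captures."""
    title: str | None
    meta_description: str | None
    canonical: str | None
    robots: str | None
    h1: list[str]
    h2: list[str]
    h3: list[str]
    schema: list[dict]            # parsed JSON-LD blocks
    open_graph: dict[str, str]    # og:* property -> content
    vitals: dict[str, float]      # LCP, INP, CLS and the Lighthouse performance score
    status_code: int
    html_hash: str                # SHA-256 of the full HTML
    schema_hash: str              # SHA-256 of the schema content


FIELD_COUNT = len(fields(Snapshot))  # 13
```

Sorting keys before hashing is a deliberate choice in this sketch: a harmless reordering of JSON-LD properties does not register as drift, while any change to a value still does.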

| Rule | Severity | Why it matters |
|---|---|---|
| Canonical URL changed | CRITICAL | Wrong canonical can deindex pages |
| Canonical URL removed | CRITICAL | Google guesses, often wrong for query params |
| Robots meta noindex added | CRITICAL | Removes pages from the index within days |
| Title tag removed | CRITICAL | Page loses primary ranking signal |
| H1 tag removed | CRITICAL | Loses primary topic signal |
| Schema JSON-LD removed | CRITICAL | Rich results disappear within hours |
| HTTP status changed to error | CRITICAL | Rankings drop within days |
| H1 text changed significantly | CRITICAL | May shift topical relevance |
| Title text changed | WARNING | Snippet display and CTR affected |
| Meta description changed | WARNING | Snippet display affected |
| Core Web Vitals regressed >20% | WARNING | Page experience signal weakens |
| Lighthouse score dropped 10+ points | WARNING | Bottleneck investigation needed |
| Schema JSON-LD content modified | WARNING | Type or property changes risk validation |
| Open Graph tags removed | WARNING | Social previews break |
| New schema JSON-LD added | INFO | Positive, but validate the new block |
| H2 structure changed | INFO | Review topical alignment |
| HTML content hash changed | INFO | Catch-all for any body content drift |
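Each rule in the table reduces to a before/after check. The Python sketch below implements a subset of them (canonical, noindex, title, H1, HTTP status, schema, and the two performance thresholds) over plain dicts. The dict keys, the rule identifiers, and the 0.5 similarity cutoff used for "changed significantly" are assumptions for illustration; the skill's own thresholds and data structures may differ.

```python
from difflib import SequenceMatcher
from enum import IntEnum


class Severity(IntEnum):
    INFO = 1
    WARNING = 2
    CRITICAL = 3


def text_similarity(a: str, b: str) -> float:
    """Ratio in [0, 1]; 1.0 means identical strings."""
    return SequenceMatcher(None, a, b).ratio()


def compare(old: dict, new: dict, h1_cutoff: float = 0.5) -> list:
    """Evaluate a subset of the drift rules; keys and cutoff are hypothetical."""
    found = []

    # Canonical: removal and change are both CRITICAL.
    if old.get("canonical") and not new.get("canonical"):
        found.append(("canonical_removed", Severity.CRITICAL))
    elif old.get("canonical") and new["canonical"] != old["canonical"]:
        found.append(("canonical_changed", Severity.CRITICAL))

    # A noindex directive appearing in robots meta is CRITICAL.
    old_robots = (old.get("robots") or "").lower()
    new_robots = (new.get("robots") or "").lower()
    if "noindex" in new_robots and "noindex" not in old_robots:
        found.append(("noindex_added", Severity.CRITICAL))

    # Title: removal is CRITICAL, any text change is a WARNING.
    if old.get("title") and not new.get("title"):
        found.append(("title_removed", Severity.CRITICAL))
    elif old.get("title") and new["title"] != old["title"]:
        found.append(("title_changed", Severity.WARNING))

    # H1: removal or a large text change is CRITICAL.
    old_h1 = " ".join(old.get("h1", []))
    new_h1 = " ".join(new.get("h1", []))
    if old_h1 and not new_h1:
        found.append(("h1_removed", Severity.CRITICAL))
    elif old_h1 and text_similarity(old_h1, new_h1) < h1_cutoff:
        found.append(("h1_changed_significantly", Severity.CRITICAL))

    # HTTP status moving from a non-error code into 4xx/5xx is CRITICAL.
    if old.get("status", 200) < 400 <= new.get("status", 200):
        found.append(("status_error", Severity.CRITICAL))

    # JSON-LD: removal CRITICAL, new blocks INFO, other edits WARNING.
    old_ld, new_ld = old.get("schema", []), new.get("schema", [])
    if old_ld and not new_ld:
        found.append(("schema_removed", Severity.CRITICAL))
    elif len(new_ld) > len(old_ld):
        found.append(("schema_added", Severity.INFO))
    elif new_ld != old_ld:
        found.append(("schema_modified", Severity.WARNING))

    # LCP, INP and CLS are lower-is-better, so >20% growth is a regression.
    old_v, new_v = old.get("vitals", {}), new.get("vitals", {})
    for metric in ("lcp", "inp", "cls"):
        before, after = old_v.get(metric), new_v.get(metric)
        if before and after is not None and after > before * 1.2:
            found.append((f"{metric}_regressed", Severity.WARNING))

    # Lighthouse performance score dropping by 10 or more points.
    before, after = old_v.get("performance"), new_v.get("performance")
    if before is not None and after is not None and before - after >= 10:
        found.append(("performance_dropped", Severity.WARNING))

    return found
```

Using an `IntEnum` for severity makes "the worst finding on this page" a plain `max()` over the results, which is the one value a CI gate needs.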

The severity tier sets the response window. CRITICAL means immediate fix. WARNING means investigate within a week. INFO is for awareness only and is often intentional (a new schema block, a content edit, a planned heading restructure). The classification keeps noise low so the signal stays loud.

Why SQLite, Why Local

Drift state lives in `.seo-drift.db`, a SQLite file in your project root. It has two tables: `baselines` stores every snapshot with its timestamp and label, and `comparisons` stores every diff result with the triggered rules and their severities. URL normalization runs on every write, so `https://Example.com/page/` and `https://example.com/page?utm_source=x` match the same baseline.
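A minimal sketch of that layout with Python's built-in `sqlite3` module follows. The column names, the `normalize_url` rules (lowercase scheme and host, drop `utm_*` parameters and the fragment, sort the remaining parameters, trim a trailing slash), and the `save_baseline` helper are assumptions chosen to reproduce the example above, not the skill's actual schema. Note the `?` placeholders: values are bound as parameters, never formatted into the SQL string.

```python
import sqlite3
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical two-table layout; snapshots and findings are stored as JSON text.
SCHEMA = """
CREATE TABLE IF NOT EXISTS baselines (
    id            INTEGER PRIMARY KEY,
    url           TEXT NOT NULL,
    label         TEXT,
    captured_at   TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP,
    snapshot_json TEXT NOT NULL
);
CREATE TABLE IF NOT EXISTS comparisons (
    id            INTEGER PRIMARY KEY,
    baseline_id   INTEGER NOT NULL REFERENCES baselines(id),
    url           TEXT NOT NULL,
    compared_at   TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP,
    findings_json TEXT NOT NULL
);
"""


def normalize_url(url: str) -> str:
    """Lowercase scheme and host, drop utm_* params and the fragment, trim a trailing slash."""
    parts = urlsplit(url)
    path = parts.path.rstrip("/") or "/"
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
            if not k.lower().startswith("utm_")]
    query = urlencode(sorted(kept))
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, query, ""))


def save_baseline(conn: sqlite3.Connection, url: str, label: str, snapshot_json: str) -> int:
    """Insert a snapshot under its normalized URL; ? placeholders bind the values safely."""
    cur = conn.execute(
        "INSERT INTO baselines (url, label, snapshot_json) VALUES (?, ?, ?)",
        (normalize_url(url), label, snapshot_json),
    )
    conn.commit()
    return cur.lastrowid
```

Usage: `conn = sqlite3.connect(".seo-drift.db")`, then `conn.executescript(SCHEMA)` once, then `save_baseline(conn, url, "initial", snapshot_json)` per capture.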

Local-first by design. Your SEO state is yours, not a SaaS dashboard's. No vendor lock-in, no monthly seat fees, no data shipped to a third party. The database is portable. You can copy it between machines, commit it to a private repo, back it up to S3, or throw it away and start fresh.

Reproducible by construction. A baseline labeled pre-release-v2.1 is a deterministic artifact. You can commit the database alongside your code if you want a permanent record. Three years from now you can still run a compare against that baseline and see exactly what changed since the v2.1 release.

CI/CD Integration

This is the killer use case. Wire /seo drift baseline into your pre-deploy hook and /seo drift compare into your post-deploy hook. The compare command exits non-zero when critical drift triggers. Non-zero fails the build. Build fails, ship is blocked. No critical drift, the build passes and the deploy completes.

```bash
# Pre-deploy (in your CI)
/seo drift baseline https://staging.example.com --label pre-deploy-$(git rev-parse --short HEAD)

# Post-deploy (after live)
/seo drift compare https://example.com --against pre-deploy-$(git rev-parse --short HEAD) || exit 1
```

The --against flag references the prior baseline by label. Including the git SHA in the label means each baseline is reproducible against a specific commit. Three weeks later, when someone asks "what changed between the v2.1 deploy and now," the answer is one command away.

The exit code contract is the whole point. CI treats drift like a failing test. A flipped canonical, an added noindex, a vanished schema block: each is a CRITICAL rule, so each breaks the build the same way a unit test failure does. Ship discipline that already works for code gets extended to SEO.
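The contract fits in a few lines. This Python sketch assumes findings arrive as `(rule, severity)` string pairs; `exit_code` and `report` are hypothetical names, not part of the skill. Only a CRITICAL finding produces a non-zero status, so WARNING and INFO results are printed for humans but never block a deploy.

```python
from __future__ import annotations

import sys
from typing import TextIO


def exit_code(findings: list[tuple[str, str]]) -> int:
    """1 if any CRITICAL finding fired, else 0; WARNING and INFO never fail the build."""
    return 1 if any(severity == "CRITICAL" for _, severity in findings) else 0


def report(findings: list[tuple[str, str]], stream: TextIO = sys.stderr) -> int:
    """Print every finding for humans, then return the status CI should see."""
    for rule, severity in findings:
        print(f"[{severity}] {rule}", file=stream)
    return exit_code(findings)
```

A wrapper script built this way would end with `sys.exit(report(findings))`, and the `|| exit 1` in the CI snippet turns that non-zero status into a failed job.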

How History Works

Every baseline is timestamped and optionally labeled. Run /seo drift history <url> to list every snapshot for that URL with the drift between consecutive captures. Useful for "what changed when we deployed v2.1?" investigations, "did anything break during the CMS migration?" forensics, or "show me everything that drifted in the last 30 days" audits.

The comparison results are stored too. You do not just see that the title changed; you see exactly what the old title was, what the new title is, which rule fired, and what severity it raised. Every diff is queryable, so the SQLite file doubles as a full audit trail.

This is the part that pays back over years, not weeks. The first time a senior engineer asks "did we ever change the canonical strategy on the pricing page," the answer used to be a two-hour spelunking session through Git history and Search Console. With drift history, it is a single query. The institutional memory lives in the database, not in someone's head.
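As an illustration of that single query, the Python sketch below keeps one row per fired rule with its before and after values, then answers "did we ever change the canonical on the pricing page?" The one-table layout and the `history` helper are hypothetical; the skill's real `comparisons` table may store results differently.

```python
from __future__ import annotations

import sqlite3

# Hypothetical layout: one row per fired rule, with the values before and after.
SCHEMA = """
CREATE TABLE IF NOT EXISTS comparisons (
    url         TEXT NOT NULL,
    compared_at TEXT NOT NULL,   -- ISO-8601 text sorts chronologically
    rule        TEXT NOT NULL,
    severity    TEXT NOT NULL,
    old_value   TEXT,
    new_value   TEXT
);
"""


def history(conn: sqlite3.Connection, url: str, rule: str | None = None) -> list[tuple]:
    """Fired rules for one URL, newest first, optionally filtered to a single rule."""
    sql = ("SELECT compared_at, rule, severity, old_value, new_value "
           "FROM comparisons WHERE url = ?")
    params = [url]
    if rule is not None:
        sql += " AND rule = ?"
        params.append(rule)
    sql += " ORDER BY compared_at DESC"
    return conn.execute(sql, params).fetchall()
```

Usage: `history(conn, "https://example.com/pricing", rule="canonical_changed")` returns every recorded canonical change for that page with its old and new values.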

Community Contribution

Dan Colta contributed the seo-drift skill via the Pro Hub Challenge in the AI Marketing Hub Pro community. The original lives at github.com/dancolta/seo-drift-monitor and was integrated into Claude SEO v1.9.0 with permission.

During integration the implementation was security-hardened. The original used curl via subprocess for HTTP fetching, which was replaced with the validated fetch_page.py pipeline that enforces SSRF protections (blocks private IPs, loopback, reserved ranges, GCP metadata endpoints). All SQLite queries were converted to parameterized placeholders. TLS verification is mandatory everywhere. The skill ships with the same security posture as the rest of Claude SEO.
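For readers curious what an SSRF guard of this kind involves, here is a minimal Python sketch built on the standard `ipaddress` and `socket` modules. It is not `fetch_page.py`; `check_url`, `is_blocked_ip`, and `BLOCKED_HOSTNAMES` are hypothetical. It resolves the hostname and rejects private, loopback, reserved, link-local (which covers the 169.254.169.254 metadata address), multicast, and unspecified addresses.

```python
import ipaddress
import socket
from urllib.parse import urlsplit

# Hostnames rejected before DNS resolution (GCP's metadata server name).
BLOCKED_HOSTNAMES = {"metadata.google.internal"}


def is_blocked_ip(ip: str) -> bool:
    """True for addresses a public-web fetcher should never contact."""
    addr = ipaddress.ip_address(ip)
    return (
        addr.is_private
        or addr.is_loopback
        or addr.is_reserved
        or addr.is_link_local      # includes 169.254.169.254
        or addr.is_multicast
        or addr.is_unspecified
    )


def check_url(url: str) -> None:
    """Raise ValueError unless url is http(s) and every resolved address is public."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https"):
        raise ValueError(f"unsupported scheme: {parts.scheme!r}")
    host = parts.hostname
    if not host or host in BLOCKED_HOSTNAMES:
        raise ValueError(f"blocked host: {host!r}")
    for *_, sockaddr in socket.getaddrinfo(host, parts.port):
        if is_blocked_ip(sockaddr[0]):
            raise ValueError(f"{host!r} resolves to blocked address {sockaddr[0]}")
```

A production fetcher also has to connect to the exact address it validated and re-check every redirect hop; otherwise a DNS answer that changes between the check and the request can slip past the guard.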

Pair With the Rest of Claude SEO

Drift catches deploy-time regressions. Audit catches systemic issues. Together: ship with confidence.

- /seo audit for periodic full-stack analysis across 9 specialized subagents

- /seo technical for crawlability and indexability monitoring

- /seo schema for structured-data detection and validation

- /seo drift for the deploy-time guardrail itself

The cross-skill integration is wired in. When drift detects a schema removal, it recommends /seo schema for full validation. When CWV regresses, it points at /seo technical. When the title changes, it suggests /seo page. Drift is the alarm, the specialized skills are the diagnostic kit.

Start Now

The setup is five minutes. The payoff is every deploy from here on.

- Install Claude SEO from the install guide

- Capture your first baseline: `/seo drift baseline https://your-site.com --label initial`

- Make a change and deploy

- Run `/seo drift compare https://your-site.com --against initial`

- Wire `/seo drift compare` into CI once you trust its output

Start with one critical page. The homepage, the highest-traffic article, the money page. Get a baseline, run a few compares, see what the output looks like. Once the signal-to-noise feels right, expand to the rest of the site and add the CI hook.

The Bottom Line

SEO regressions are the silent killer of organic traffic. They show up weeks late, after the rankings have already moved, and the root cause is usually a 5-minute fix that should have been caught at deploy time. Drift monitoring makes regressions loud at deploy time. That is the whole pitch. Treat your live HTML the way you treat your source code, and ship like you mean it.