- 4 new skills built by community contributors: semantic clustering, SXO, drift monitoring, e-commerce SEO

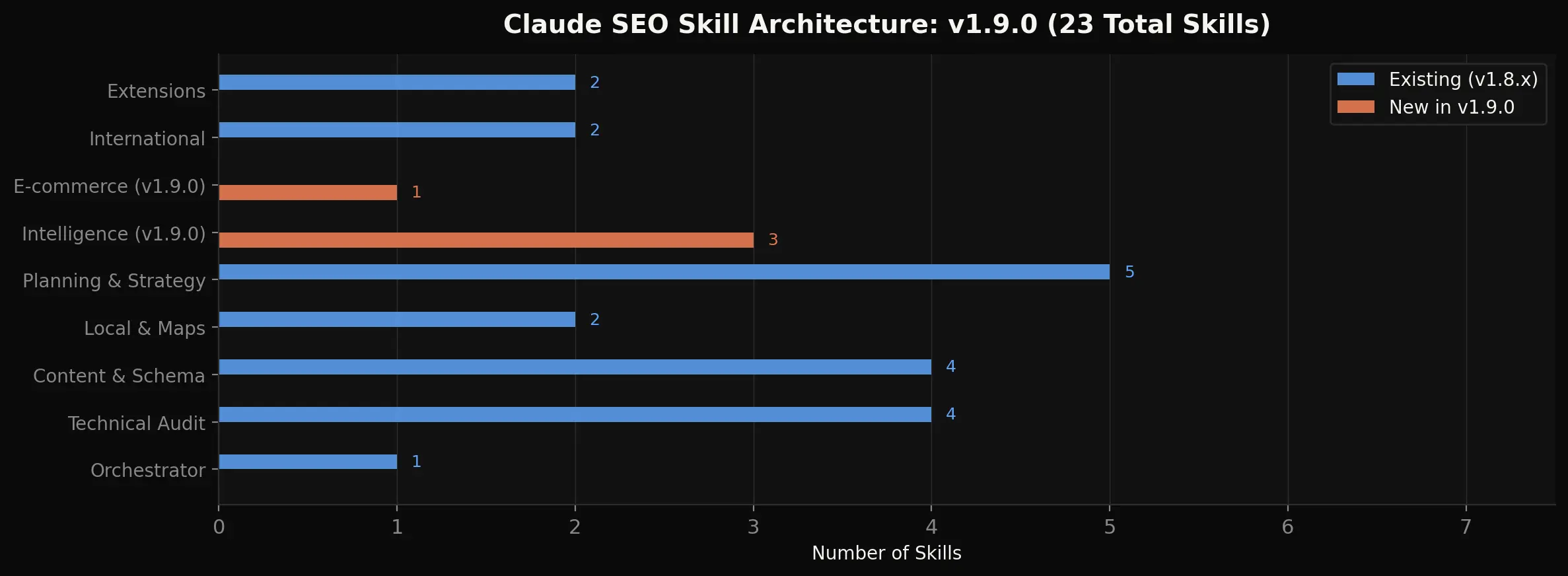

- 23 total skills, 17 subagents, 30 scripts - up from 19/13/23 in v1.8.x

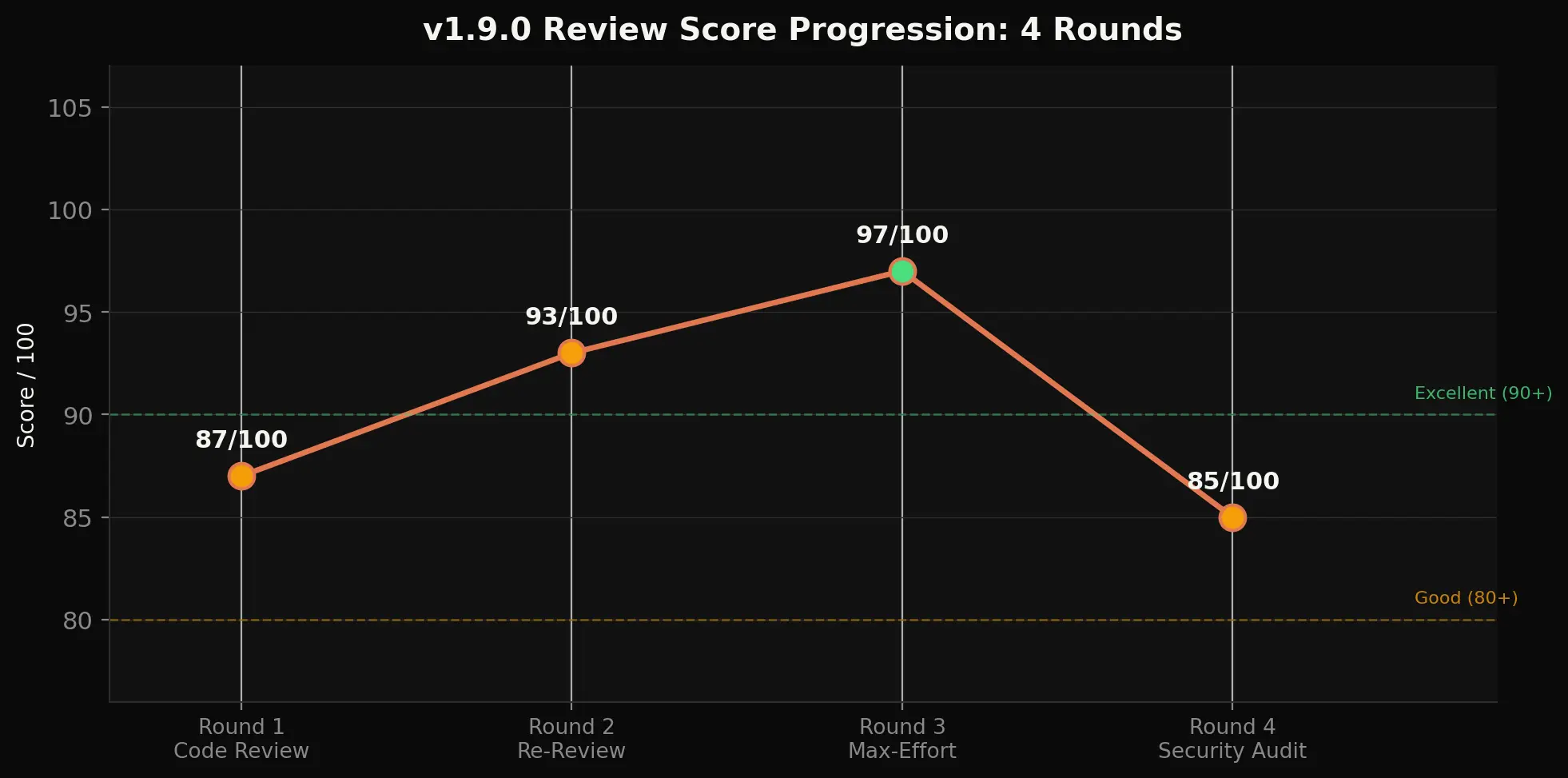

- Security score: 85/100 after a 4-round review process (scores by round: 87, 93, 97, 85)

- Free forever - all new features work without API keys, MIT licensed

What Changed in v1.9.0

68 files changed, 9,662 lines added. This is the largest Claude SEO release to date, and the first built primarily by community contributors rather than a single maintainer. Every previous version was solo work. v1.9.0 changes that.

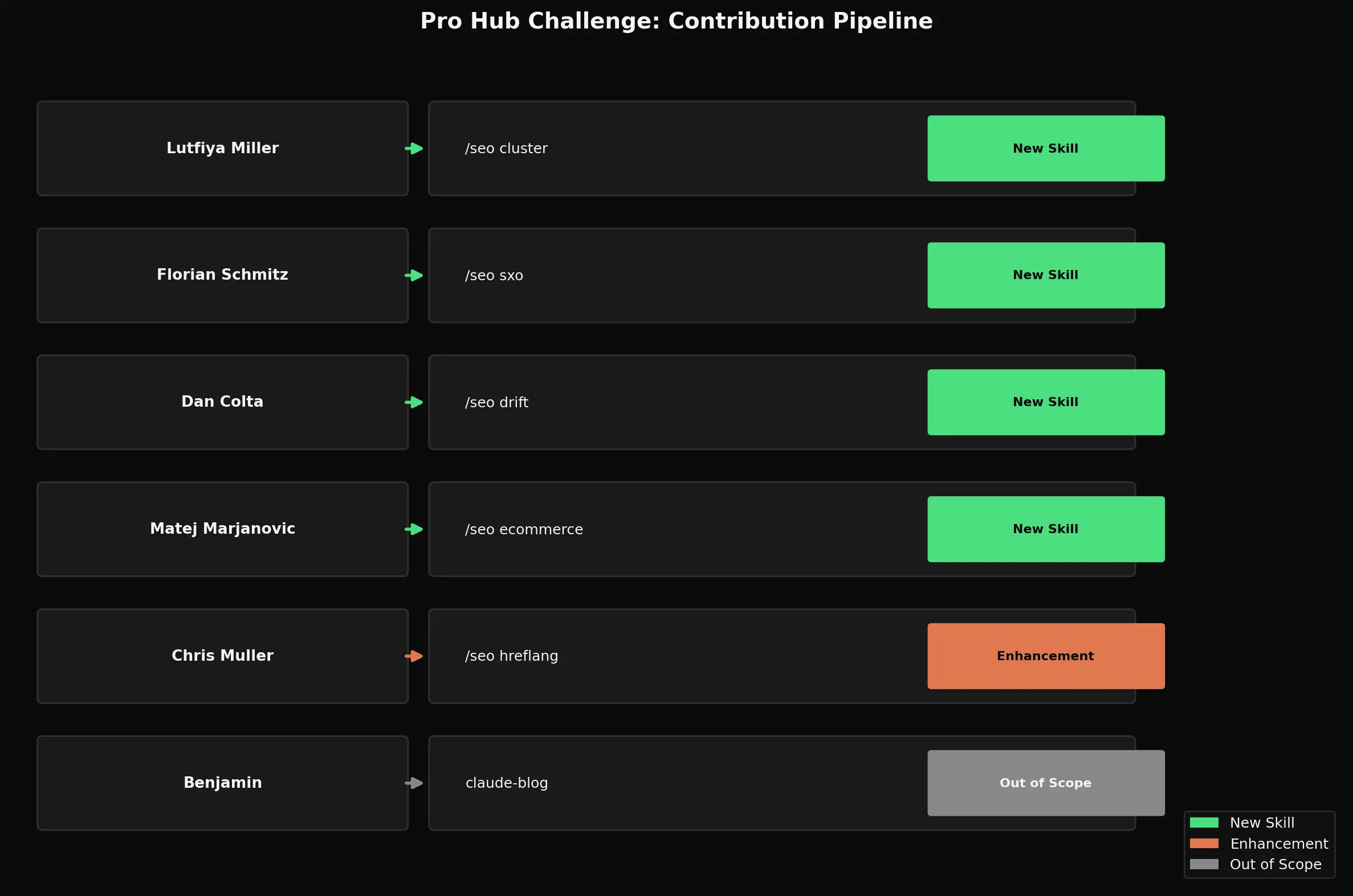

The AI Marketing Hub Pro Skool community ran its first Pro Hub Challenge in March 2026. The premise was simple: build a Claude Code skill that extends claude-seo, submit it on GitHub, and the best submissions get integrated into the main tool and win Claude Credits from a $600 prize pool.

6 people submitted. 5 scored Proficient or above across 4 rounds of review. Each submission went through code review, re-review, max-effort analysis, and a full security audit. The result: 4 entirely new skills, 1 major enhancement, 7 new Python scripts, 4 new subagents, and 9 new reference files.

| Metric | v1.8.x | v1.9.0 |
|---|---|---|
| Skills | 19 | 23 |
| Subagents | 13 | 17 |
| Scripts | 23 | 30 |
| Reference files | 18 | 27 |
| Contributors | 1 | 6 |

The 4 New Skills

seo-cluster - Semantic Topic Clustering

Community contributor: Lutfiya Miller

Most keyword clustering tools cost $50 to $200 per month. Ahrefs charges $99/mo for basic keyword grouping. Semrush is $117/mo. The /seo cluster skill does it for free using SERP overlap analysis.

The approach is straightforward: search Google for each target keyword and compare which URLs appear in the results for more than one keyword. Keywords whose results share 3 or more URLs belong to the same topic cluster. If "content marketing strategy" and "content marketing plan" both return the same 4 URLs in the top 10, they belong together.
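The overlap rule is simple enough to sketch. The Python below is a minimal illustration, not the skill's code: it assumes you have already collected the top-10 result URLs for each keyword, and the function name, the union-find grouping, and the sample URLs are all hypothetical.

```python
from itertools import combinations


def cluster_keywords(serps: dict[str, list[str]], min_overlap: int = 3,
                     top_n: int = 10) -> list[set[str]]:
    """Group keywords whose top-N result URLs share at least min_overlap URLs."""
    # Union-find over keywords: every keyword starts in its own cluster.
    parent = {kw: kw for kw in serps}

    def find(kw: str) -> str:
        while parent[kw] != kw:
            parent[kw] = parent[parent[kw]]  # path halving keeps chains short
            kw = parent[kw]
        return kw

    top = {kw: set(urls[:top_n]) for kw, urls in serps.items()}
    # Compare every keyword pair and merge clusters when overlap meets the threshold.
    for a, b in combinations(serps, 2):
        if len(top[a] & top[b]) >= min_overlap:
            parent[find(a)] = find(b)

    clusters: dict[str, set[str]] = {}
    for kw in serps:
        clusters.setdefault(find(kw), set()).add(kw)
    return list(clusters.values())


serps = {
    "content marketing strategy": ["a.com/1", "b.com/2", "c.com/3", "d.com/4", "e.com/5"],
    "content marketing plan": ["a.com/1", "b.com/2", "c.com/3", "d.com/4", "f.com/6"],
    "email newsletter tips": ["g.com/7", "h.com/8", "a.com/1"],
}
print(cluster_keywords(serps))
```

One consequence of this design: union-find makes grouping transitive, so if A overlaps B and B overlaps C, all three land in one cluster even when A and C share fewer than 3 URLs. An implementation that wants tighter clusters could instead require each keyword to overlap with the cluster's pillar keyword.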

The output is a hub-spoke content architecture: pillar pages at the center, supporting pages around them, and an interactive SVG visualization you can open in any browser. The skill includes 3 reference files covering the SERP overlap methodology, hub-spoke architecture patterns, and the execution workflow. No Ahrefs, no Semrush, no API keys.

/seo cluster "content marketing strategy"

seo-sxo - Search Experience Optimization

Community contributor: Florian Schmitz

SXO bridges the gap between ranking and converting. You can rank #3 and still get zero clicks if your page type does not match what users expect. The /seo sxo skill reads SERPs backwards - it starts from what users actually click, then checks if your page matches that intent type.

If Google shows product pages for your keyword but yours is an informational blog post, you have a page-type mismatch. The skill detects this automatically using a page-type taxonomy (landing page, blog, product, category, tool, comparison, FAQ). It also scores pages against 3 user personas - researcher, buyer, and browser - and flags gaps where your content ranks but fails to satisfy the search intent behind the query.
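A rough sketch of the mismatch check, assuming each ranking result has already been classified into one of the taxonomy's page types (the hard part, not shown here). The function name and the 50% dominance threshold are illustrative assumptions; the skill's actual logic may weigh results differently.

```python
from collections import Counter

# Page types from the taxonomy described above.
PAGE_TYPES = {"landing page", "blog", "product", "category", "tool", "comparison", "faq"}


def page_type_mismatch(serp_types: list[str], your_type: str,
                       min_share: float = 0.5) -> dict:
    """Compare your page type with the dominant type among ranking results."""
    if not serp_types:
        raise ValueError("need at least one classified result")
    unknown = (set(serp_types) | {your_type}) - PAGE_TYPES
    if unknown:
        raise ValueError(f"not in taxonomy: {sorted(unknown)}")
    dominant, count = Counter(serp_types).most_common(1)[0]
    share = count / len(serp_types)
    # Only flag a mismatch when one page type clearly dominates the results.
    return {
        "dominant": dominant,
        "share": share,
        "mismatch": share >= min_share and your_type != dominant,
    }


top10 = ["product"] * 7 + ["category"] * 2 + ["blog"]
print(page_type_mismatch(top10, "blog"))
# {'dominant': 'product', 'share': 0.7, 'mismatch': True}
```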

Reference files include a page-type taxonomy, a user story framework for intent mapping, persona scoring criteria, and wireframe templates for each page type.

/seo sxo https://example.com/pricing

seo-drift - SEO Change Monitoring

Community contributor: Dan Colta

Think of it as git for SEO. The /seo drift skill captures a baseline snapshot of any page's SEO state - title, meta description, headings, schema, word count, internal links, canonical URL, robots directives - and stores it in a local SQLite database.
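To make the baseline idea concrete, here is a minimal Python sketch using only the standard library. It captures a subset of the fields listed above (title, meta description, canonical URL, H1s, and a rough word count) and stores each snapshot as JSON in SQLite. Fetching the HTML is left to the caller, and the class, function, and table names are hypothetical rather than taken from drift_baseline.py.

```python
import json
import sqlite3
import time
from html.parser import HTMLParser
from typing import Optional


class SEOSnapshot(HTMLParser):
    """Collects a few SEO fields from raw HTML (a subset of what the skill tracks)."""

    def __init__(self):
        super().__init__()
        self.title, self.meta_description, self.canonical = "", None, None
        self.h1 = []
        self.words = 0
        self._current = None  # "title" or "h1" while capturing that element's text
        self._skip = 0        # nesting depth inside <script>/<style>

    def handle_starttag(self, tag, attrs):
        a = {k: (v or "") for k, v in attrs}
        if tag == "meta" and a.get("name", "").lower() == "description":
            self.meta_description = a.get("content", "")
        elif tag == "link" and a.get("rel", "").lower() == "canonical":
            self.canonical = a.get("href")
        elif tag in ("script", "style"):
            self._skip += 1
        elif tag in ("title", "h1"):
            self._current = tag
            if tag == "h1":
                self.h1.append("")

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1
        elif tag == self._current:
            self._current = None

    def handle_data(self, data):
        if self._skip:
            return  # script/style text is not page content
        if self._current == "title":
            self.title += data
            return  # the title is tracked separately, not counted as body words
        if self._current == "h1":
            self.h1[-1] += data
        self.words += len(data.split())


def save_baseline(conn: sqlite3.Connection, url: str, html: str) -> dict:
    """Parse the page and store its snapshot as a new baseline row."""
    parser = SEOSnapshot()
    parser.feed(html)
    parser.close()
    snapshot = {
        "title": parser.title.strip(),
        "meta_description": parser.meta_description,
        "canonical": parser.canonical,
        "h1": [h.strip() for h in parser.h1],
        "word_count": parser.words,
    }
    conn.execute("CREATE TABLE IF NOT EXISTS baselines "
                 "(url TEXT, captured_at REAL, snapshot TEXT)")
    conn.execute("INSERT INTO baselines VALUES (?, ?, ?)",
                 (url, time.time(), json.dumps(snapshot)))
    conn.commit()
    return snapshot


def latest_baseline(conn: sqlite3.Connection, url: str) -> Optional[dict]:
    """Return the most recent stored snapshot for a URL, or None."""
    row = conn.execute("SELECT snapshot FROM baselines WHERE url = ? "
                       "ORDER BY captured_at DESC LIMIT 1", (url,)).fetchone()
    return json.loads(row[0]) if row else None
```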

Run the compare command days or weeks later and it diffs the current state against the baseline using 17 comparison rules across 3 severity levels (critical, warning, info). Title changed? Critical. Word count dropped by 40%? Warning. Meta description shortened? Info.
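The rule-based comparison can be sketched the same way. This hypothetical Python version implements only the three example rules above, not all 17; the 40% threshold comes from the example, while treating any decrease in meta description length as "shortened" is an assumption. It works on plain snapshot dictionaries, so it stands on its own.

```python
from dataclasses import dataclass

SEVERITY_ORDER = {"critical": 0, "warning": 1, "info": 2}


@dataclass
class Finding:
    rule: str
    severity: str
    detail: str


def title_changed(old: dict, new: dict):
    if old.get("title") != new.get("title"):
        return f"title {old.get('title')!r} -> {new.get('title')!r}"
    return None


def word_count_drop(old: dict, new: dict, threshold: float = 0.4):
    before, after = old.get("word_count", 0), new.get("word_count", 0)
    if before and (before - after) / before >= threshold:
        return f"word count {before} -> {after}"
    return None


def meta_description_shortened(old: dict, new: dict):
    before = old.get("meta_description") or ""
    after = new.get("meta_description") or ""
    if len(after) < len(before):
        return f"meta description {len(before)} -> {len(after)} chars"
    return None


# (rule name, severity, check). Each check returns a detail string or None.
RULES = [
    ("title_changed", "critical", title_changed),
    ("word_count_drop", "warning", word_count_drop),
    ("meta_description_shortened", "info", meta_description_shortened),
]


def compare(baseline: dict, current: dict) -> list[Finding]:
    """Run every rule and return findings ordered from critical to info."""
    findings = []
    for name, severity, check in RULES:
        detail = check(baseline, current)
        if detail:
            findings.append(Finding(name, severity, detail))
    return sorted(findings, key=lambda f: SEVERITY_ORDER[f.severity])


baseline = {"title": "Blue Widget | Acme", "word_count": 1200,
            "meta_description": "Durable blue widgets with free shipping."}
current = {"title": "Blue Widget", "word_count": 650,
           "meta_description": "Durable blue widgets."}
for finding in compare(baseline, current):
    print(finding.severity, finding.rule, finding.detail)
```

Keeping each rule as a (name, severity, check) entry means adding a rule is one function plus one list item, which is one plausible way to organize a larger rule set.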

This catches unintentional SEO regressions after CMS updates, content rewrites, or developer deployments. The HTML diff report highlights exactly what changed and whether it matters. The history command shows drift trends over time, so you can spot patterns like gradual content thinning or schema degradation.

The implementation uses 4 Python scripts: drift_baseline.py, drift_compare.py, drift_report.py, and drift_history.py. All data stays local in SQLite - no cloud dependencies, no third-party APIs.

/seo drift baseline https://example.com

/seo drift compare https://example.com

/seo drift history https://example.com

seo-ecommerce - E-commerce SEO

Community contributor: Matej Marjanovic

Product pages have unique SEO requirements that generic audit tools miss: Product schema with price, availability, and review markup; merchant feed optimization; and structured data that passes Google's rich results validator.

The /seo ecommerce skill handles all of it. It generates correct Product JSON-LD with required and recommended properties, validates against Google's Merchant Center requirements, and flags common mistakes like missing gtin or brand fields. If you have the DataForSEO extension installed, it also pulls Google Shopping and Amazon marketplace intelligence - competitor pricing, product listing ads, and marketplace position data via 3 new scripts: dataforseo_costs.py, dataforseo_merchant.py, and dataforseo_normalize.py.
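As an illustration of the kind of markup involved, the sketch below builds a schema.org Product object with a single Offer and flags missing brand and GTIN properties. It is not the skill's generator: the function names are hypothetical, and treating name, price, and priceCurrency as the required set is a simplification, since Google documents separate requirements for product snippets and merchant listings.

```python
import json

# Simplified assumption about which Offer fields are required.
REQUIRED_OFFER_FIELDS = ("price", "priceCurrency")


def product_jsonld(name: str, price: float, currency: str,
                   availability: str = "InStock", **extra) -> dict:
    """Build a schema.org Product object with a single Offer."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
        },
    }
    if "brand" in extra:
        data["brand"] = {"@type": "Brand", "name": extra.pop("brand")}
    data.update(extra)  # e.g. sku, image, gtin13
    return data


def lint_product(data: dict) -> list[str]:
    """Flag missing required and recommended properties (single-Offer case)."""
    issues = []
    if not data.get("name"):
        issues.append("missing required: name")
    offer = data.get("offers") or {}
    issues += [f"missing required: offers.{f}" for f in REQUIRED_OFFER_FIELDS
               if f not in offer]
    if "brand" not in data:
        issues.append("missing recommended: brand")
    # schema.org has several GTIN properties (gtin, gtin8, gtin12, gtin13, gtin14).
    if not any(key.startswith("gtin") for key in data):
        issues.append("missing recommended: gtin")
    return issues


product = product_jsonld("Blue Widget", 19.99, "USD", sku="BW-001")
print(lint_product(product))
print(json.dumps(product, indent=2))
```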

The DataForSEO integration includes cost guardrails that were a significant security focus of this release (more on that below).

/seo ecommerce https://example.com/product/blue-widget

Enhanced: International SEO Cultural Profiles

Community contributor: Chris Muller

The existing /seo hreflang skill already validated language tags and detected common mistakes. Chris Muller added cultural adaptation profiles for 4 regions: DACH (Germany, Austria, Switzerland), Francophone, Hispanic, and Japanese markets.

Each profile includes locale-specific formatting rules (date formats, number separators, currency placement), content parity checks, and cultural considerations that go beyond simple translation. The skill now validates not just whether your hreflang tags are correct, but whether your content is actually adapted for the target culture.
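A locale profile can be modeled as a small record of formatting rules. The sketch below is hypothetical (the skill's profile format is not described here); the separators and date patterns follow common conventions for Germany, France, and Japan. Profiles are keyed by full locale rather than by region because conventions vary inside a region: Swiss German uses CHF and an apostrophe as the thousands separator, and Mexico uses a decimal point where Spain uses a comma.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LocaleProfile:
    decimal_sep: str
    group_sep: str
    currency: str
    currency_first: bool
    decimals: int
    date_fmt: str  # strftime pattern


# Simplified, hypothetical profiles; a full DACH profile would add de-CH, etc.
PROFILES = {
    "de-DE": LocaleProfile(",", ".", "€", False, 2, "%d.%m.%Y"),
    "fr-FR": LocaleProfile(",", "\u202f", "€", False, 2, "%d/%m/%Y"),
    "ja-JP": LocaleProfile(".", ",", "¥", True, 0, "%Y/%m/%d"),
}


def format_price(value: float, locale: str) -> str:
    p = PROFILES[locale]
    raw = f"{value:,.{p.decimals}f}"  # US-style first, e.g. "1,234.56"
    # Swap separators via a placeholder so "," and "." don't clobber each other.
    number = (raw.replace(",", "\x00")
                 .replace(".", p.decimal_sep)
                 .replace("\x00", p.group_sep))
    if p.currency_first:
        return f"{p.currency}{number}"
    return f"{number}\u00a0{p.currency}"  # no-break space before a trailing symbol


def format_date(d: date, locale: str) -> str:
    return d.strftime(PROFILES[locale].date_fmt)


for loc in PROFILES:
    print(loc, format_price(1234.56, loc), format_date(date(2026, 4, 28), loc))
```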

Quality and Security

Every submission went through 4 review rounds. The aggregate score started at 87/100, peaked at 97/100 after the max-effort review, and settled at 85/100 after the security audit surfaced pre-existing issues across the entire codebase.

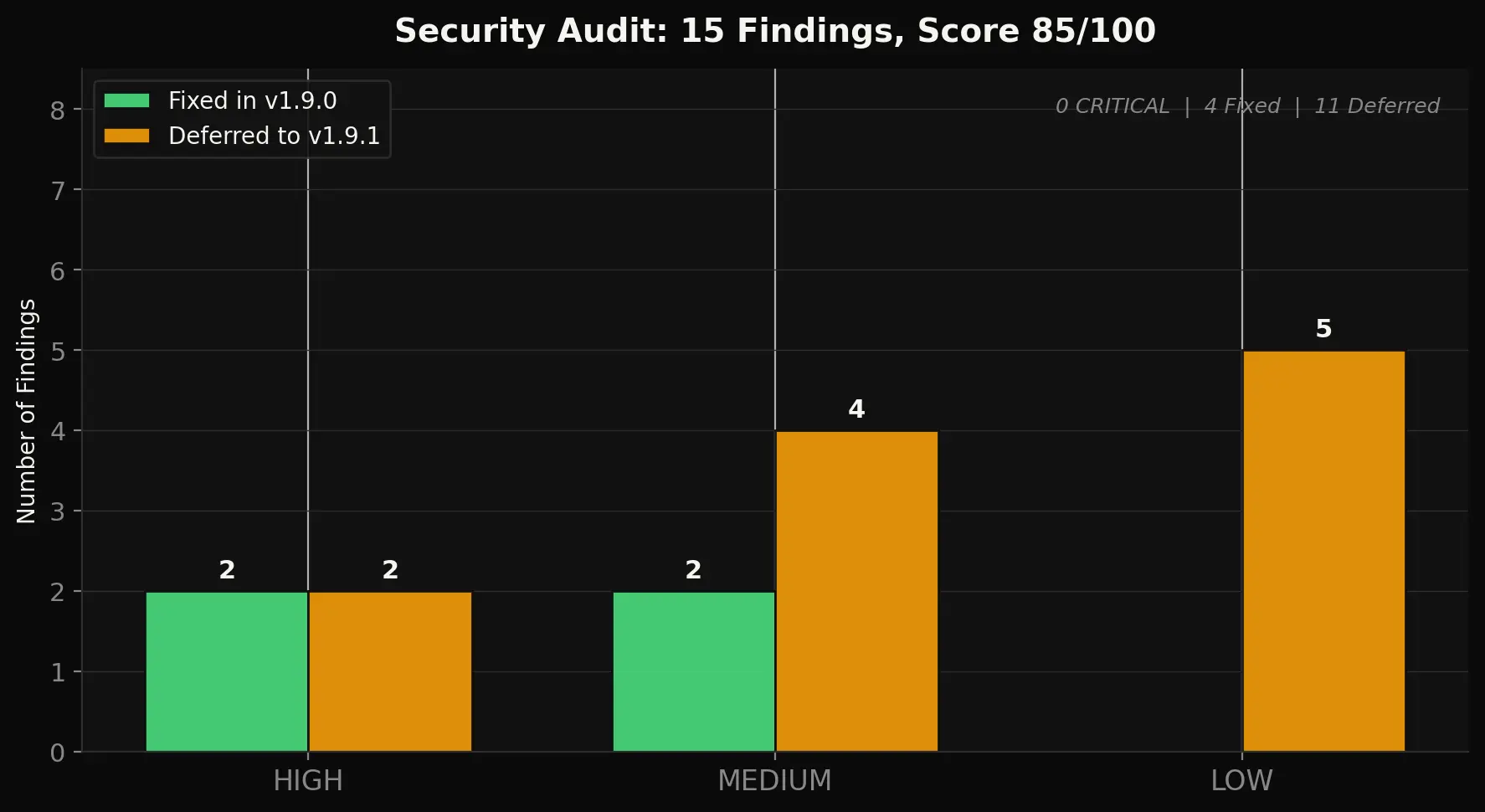

The security audit produced 15 findings. 4 were fixed in v1.9.0:

- Cost guardrail bypass (HIGH) - Unknown DataForSEO endpoints defaulted to $0, skipping the approval gate. Fixed: unknown endpoints now require explicit approval.

- XSS in cluster-map.html (MEDIUM) - Cluster labels rendered as raw HTML. Fixed: all labels wrapped in escapeHtml().

- File locking (MEDIUM) - The cost ledger had no concurrency protection. Fixed: fcntl locking on all writes.

- CI coverage (LOW) - 7 new scripts were missing from the validation matrix. Fixed: all added to GitHub Actions.
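The guardrail and locking fixes fit together naturally, so this sketch shows both: an unknown endpoint raises instead of defaulting to $0, and the ledger is read, checked, and appended under an exclusive fcntl.flock lock so two concurrent runs cannot both pass the budget check. The endpoint names, prices, JSON-lines ledger format, and function names are hypothetical, and fcntl is only available on POSIX systems.

```python
import fcntl  # POSIX only; not available on Windows
import json
import time
from typing import Optional

# Hypothetical per-call prices for illustration; not DataForSEO's real pricing.
KNOWN_COSTS = {
    "serp/google/organic": 0.002,
    "merchant/google/products": 0.003,
}


class ApprovalRequired(Exception):
    """Raised when a call must be explicitly approved before it can spend money."""


def record_call(ledger_path: str, endpoint: str, budget: float,
                approved_cost: Optional[float] = None) -> float:
    """Apply the cost guardrail, then append the call to a JSON-lines ledger.

    Returns the total recorded spend including this call.
    """
    cost = KNOWN_COSTS.get(endpoint, approved_cost)
    if cost is None:
        # The original bypass treated unknown endpoints as $0 and skipped
        # this gate; here they need an explicitly approved cost instead.
        raise ApprovalRequired(f"unknown endpoint {endpoint!r}: approve a cost first")
    with open(ledger_path, "a+", encoding="utf-8") as ledger:
        # Hold an exclusive lock across read, check, and append so that
        # concurrent processes cannot both pass the budget check.
        fcntl.flock(ledger.fileno(), fcntl.LOCK_EX)
        try:
            ledger.seek(0)
            spent = sum(json.loads(line)["cost"]
                        for line in ledger.read().splitlines() if line.strip())
            if spent + cost > budget:
                raise ApprovalRequired(f"call would exceed budget {budget}")
            ledger.write(json.dumps({"ts": time.time(), "endpoint": endpoint,
                                     "cost": cost}) + "\n")
            ledger.flush()
        finally:
            fcntl.flock(ledger.fileno(), fcntl.LOCK_UN)
    return spent + cost
```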

11 findings were deferred to v1.9.1. All were pre-existing issues, formally documented for the first time by this audit.

How to Install or Upgrade

One command. No API keys required for the new skills.

claude /install github:AgriciDaniel/claude-seo

All 23 skills, 17 subagents, and 30 scripts are included. MIT licensed, free forever, no paid tiers. The optional DataForSEO extension adds live SERP data if you want it, but everything in v1.9.0 works without it.

If you are upgrading from v1.8.x, the install command handles it automatically. Your existing configuration and any saved drift baselines will be preserved.

What Comes Next

v1.9.1 will address the 11 deferred security findings, including DNS rebinding protection in validate_url(), install script input sanitization, and OAuth token file permissions.
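v1.9.1 has not shipped, so the following is not the project's validate_url(). It is a generic Python sketch of one common DNS rebinding defense: resolve the hostname once, reject any non-public address, and then connect to the validated IP rather than resolving the name a second time. The function name is hypothetical.

```python
import ipaddress
import socket
from urllib.parse import urlsplit


def resolve_public(url: str) -> list[str]:
    """Resolve a URL's host once and reject it if any address is not public.

    Callers should connect to one of the returned IPs (sending the original
    Host header) instead of resolving the name again; a second lookup is
    exactly what a DNS rebinding attack exploits.
    """
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        raise ValueError(f"unsupported URL: {url!r}")
    port = parts.port or (443 if parts.scheme == "https" else 80)
    infos = socket.getaddrinfo(parts.hostname, port, proto=socket.IPPROTO_TCP)
    addresses = sorted({info[4][0] for info in infos})
    for addr in addresses:
        ip = ipaddress.ip_address(addr.split("%")[0])  # drop any IPv6 zone id
        if not ip.is_global:  # loopback, private, link-local, reserved, ...
            raise ValueError(f"{parts.hostname} resolves to non-public {ip}")
    return addresses
```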

Pro Hub Challenge v2 is live now. The keyword is LEADS - anything touching lead generation. Skills, n8n workflows, MCP servers, scrapers, dashboards, pipelines. $600 in Claude Credits for the top two submissions. Deadline: April 28, 2026. Details in the AI Marketing Hub Pro community.

Full release notes and the PDF report are on the GitHub release page.